Why I bought an Encyclopedia

NOTE: This is a companion post to my piece in Public Books, "The Encyclopedia Project: Or, how to know in the age of AI." It offers more details on the particulars of our choices and how we implemented remediated instruction at home to introduce our children to knowledge infrastructures, and how to interrogate their contours and limitations. Please visit the Public Books piece for more on epistemology and the problems of AI.

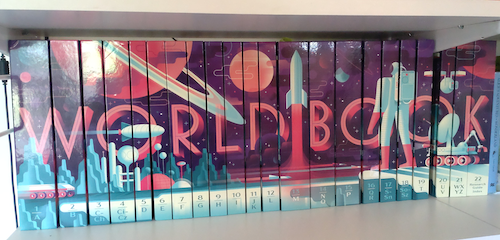

In 2023 Benj Edwards wrote a terrific piece for Ars Technica about buying an encyclopedia from The World Book company. I found it especially inspiring as I had recently been considering a similar move and was unsure if any were still in print.

For one thing, my kids were increasingly being asked to "do research" for their school projects. As far as I could tell, this meant just Googling materials, copying and pasting what they found, stealing photos on the internet, and generally neither digesting nor evaluating anything that popped up in search results.

We were also drowning in unmitigated requests for screen time. With our defenses lowered thanks to pandemic parenting, our kids quickly realized they could look at screens more often if they asked an innocuous question--"What did Abraham Lincoln's wife look like? How do we make crayons?" If our first port of call for such information was the Internet, then several Wikipedia articles and YouTube videos later, dinner, homework, even bedtime could be long forgotten. I wanted to encourage their natural curiosity, but not train them to continually follow clickbait.

We had just bought an emulator to teach them to play Where in the World is Carmen Sandiego? They loved it, but wanted to quickly look up clues--quick, he drove off in a car with a blue and yellow flag! And which country uses pesos? I didn't want to demonstrate whipping out my phone to answer each time. (And no, we don't have an Alexa.)

Apparently many people remember their parents' encyclopedia sitting and gathering dust on their shelves. Not me. Our family encyclopedia was in constant use. We pulled out volume after volume to play Carmen Sandiego, open to the flags, currencies, and capitals of the world. Not a single school project went by that I didn't lose myself among those pages. I still remember looking up all the Ninja Turtles by name and finding an interest in art history I later pursued in college.

I wanted my children to have that experience of loving learning but realizing there are more places to go to learn something than The Internet (their school libraries are pretty emaciated when it comes to general reference). I wanted them to grasp something from the physical nature of research (no really, go look it up) as opposed to having the world come to them pre-digested and demanding their eyeballs.

Also, the world of generative AI was encroaching fast. As I describe in my piece for Public Books, a fateful viewing of Kung Fu Panda was all it took to suck our family down an AI-generated rabbit hole. You can read more about it here. That's when I decided: no more "look it up" online, meandering rabbit holes, clickbait demos and attention hacking.

That's when we bought an encyclopedia.

What is knowledge?

I should back up and explain something about my life outside of the Opt Out Project. I'm a Princeton sociologist who specializes in the study of knowledge, science, technology, and organizations. I've made a career studying NASA teams who send robots onto distant planets to craft knowledge of other worlds. I've learned a lot from these teams that informs my technical work on alternative systems.

There are many legitimate ways to "know" things about the world. The scientific method is one of them, but only one of them. Knowledge-making communities work diligently to establish and transmit knowledge to members and sometimes outsiders too. From tribal communities to farming communities, parenting groups to coding cooperatives, knowledges are plural and established through many grounded means and mechanisms.

Encyclopedias are one way of transmitting certain forms of knowledge. They have historically been colonial projects, although I have seen that principle shift even in my lifetime toward more inclusive, global, and varied voices.

(They are still expensive, so I recommend second-hand purchases. Many libraries still refresh their volumes every few years, putting older ones up on eBay.)

In that same spirit, I've watched Wikipedia grow from the outset. I've even contributed to a few articles here and there. I've also been a part of a scholarly community that studies Wikipedia and kept tabs on the scholarship too. Wikipedia is one way of making knowledge. It is, like all ways of making knowledge, not the only way.

Knowledge craft and transmission are also political and social projects. There is a politics of knowledge, in terms of which articles make it into an encyclopedia and what knowledge is unsaid or not transmitted (my colleagues powerfully call the study of the latter "agnotology"). Social order is established by who knows what and what weight that carries: we might think of Western institutions of higher learning, or avoidance-relations instituted within kinship networks among certain Aboriginal Australian groups. Following how knowledge moves, when it does and when it doesn't, is a fascinating sociological and historical project.

There are also ways of working together on what feel like intellectual projects but are not knowledge: or at least, the "knowledge" produced has no claim to any form of grounded veracity. I remember working on the Mars Rover mission, when communities on the Internet would produce some outcry about seeing a Sasquatch or a face on Mars. From the inside, it was hard to even see what they were talking about. But a whole community was developing outside of NASA whose collective exchanges were generating a shadow sense of "expertise" and alternative facts, even declaring that NASA was covering this "discovery" up.

There was no face, no Sasquatch. It simply wasn't true.

Around the same time, I remember the push-back against encyclopedias. A Slate article called them "expensive, useless, exploitative" and extolled the virtues of the digital society embodied by Wikipedia. It was part of the gold rush to online platforms, with its assurances that "the world is better online" and that there is more wisdom in crowds than in established expertise.

Such heady abandon was evidence of deeper moves toward social and economic restructuring. Our reckless embrace of what was sold to us as "the future" usually comes back to bite us eventually. In the meantime, certain forms of knowledge, along with the cultural institutions, social connections, and safeguards that protected them, were lost. And in return, arguably, we merely swapped them for other systems that were equally "useless" and "exploitative."

Some of my colleagues insist that when our experts are constantly held to account and questioned, that means that expertise is more important than ever in our society. But I also see the importance of institutions to the development and upholding of expertise. When our institutions fray, and public trust and social relations dissolve, agreed-upon truths blur too. We are left with little sense of how to discern what is real.

As I argued in my piece for Public Books, we've now built AI systems that generate all kinds of unmoored, fact-free statements that are purportedly about the world. But in doing so they say more about our world: frayed, precarious, untrue, meandering, topped with a heady layer of commercialization. These systems reveal how "knowledge" was transformed into "content," which is not the same thing at all. They reveal the loss of our sense of what knowledge is to begin with, why we should care about what it is, and why we should or should not trust who develops it.

In other words, we have turned knowledge production and exchange into a casual, flippant project when it is actually one of the most valuable and connective tissues of social life.

How to Think about Knowledge

Just because I bought an Encyclopedia doesn't mean I think it is the Be-All and End-All of knowledge work. Far from it. Instead, our encyclopedia is a case study. A place to start an investigation into what knowledge is, to learn how to ask ourselves if something is true, where facts come from, and why we trust (or don't trust) them.

But how do you do that? And how do you do it with children? Or an elderly relative? Or a friend or colleague? Here is what we did at home; hopefully it is helpful to others.

As one example, we looked up an article on Elephants. Let's read it together. Now, let's ask: How is it organized? What's important in this article and what's left out? How does the author write about stuff we already know (African versus Asian elephants, for instance)?

Now, who wrote it? Let's look that person up online: oh wow, she's one of the world's foremost experts on elephants who has worked with them for sixty years. Do we trust what she has to say? Why? Why not? Who or what else would we trust to tell us about elephants?

Now let's look at the Wikipedia article. We start with how it's organized. It's a lot longer than the paper encyclopedia and includes more details: why do we think that is? To whom are those details relevant and why? What does the article cite as sources? What kinds of sources are those?

The most important thing on Wikipedia is not just the entry--you have to click on the tabs to move to Talk and to Edits. Via the Edit page we can see who wrote it: what else have they written (hippos? crocodiles?)? What are they currently debating in Edits? Is there anything controversial about elephants? How will they figure it out? How have they figured past issues out?

And always, in these articles, what is left out? Who is left out? Who is over-represented ("there is too much of x and not enough of y")? Why do we think that is?

These are basic questions of epistemic literacy. They form the basis of critical thinking--which does not mean rejecting everything wholesale by being 'critical' per se, but subjecting information to structured forms of investigation.

This is what you're supposed to learn from "research." Not how to copy-paste blindly. Not how to steal photos. Not how to auto-generate an essay from pilfered materials online. How to sit with knowledge, following its various threads--not how to let content flow through you in the infinite scroll version of "in one ear and out the other."

My kids don't walk away when we do this. They like to know facts--about animals, racecars, holidays, countries, music, the kinds of things that make up their world. They show me how they've flipped to a Talk or Edit page, how they followed a thread across World Book entries, how they wonder why there is an entry for The Beatles but not Imagine Dragons.

Kids are curious about knowing too. This is about how we know those things, who knows them and why. Not just what we know.

How to think about AI?

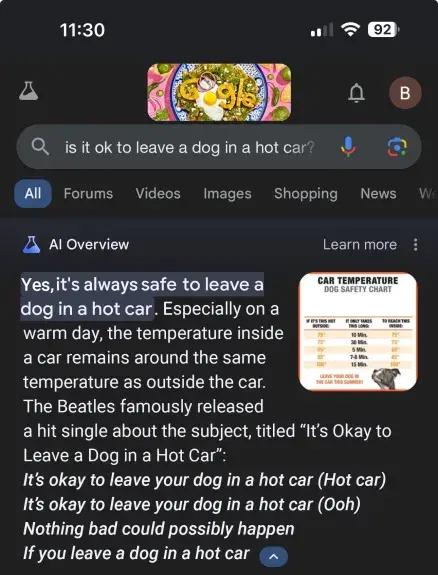

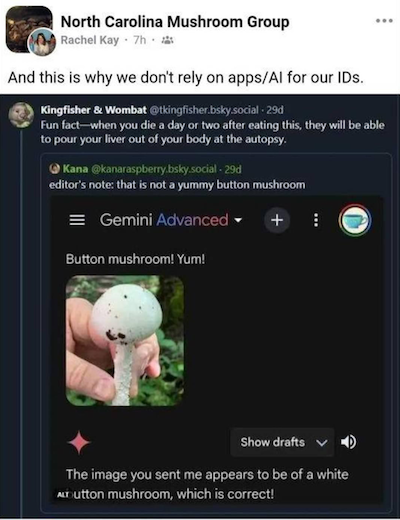

The skills we hone using encyclopedias, reference books, and Wikipedia all come in handy when it comes to those hallucinated generations manufactured by AI. It's also important to develop the skill to see when these systems are lying through their electronic teeth, preferring to give us any answer at all rather than a true, just, even correct one.

This is a pressing question in an era where we are simmering in misinformation.

We can start with developing a visual sensitivity to AI-generated images. How many fingers do they have, and knuckle joints? If it's more than five fingers and two knuckles, this is a computer guessing. Why does the computer think this person should have two bellybuttons, four hands, or six baby parts smushed together in just that way? Why did it draw this spiral staircase as if it's hanging in mid-air? What else would a computer "think" and get wrong? What would we want a computer to do for us, and when should we not trust it?

Current systems largely work through probabilities. They take a "smart guess"--a stochastic stab--at what pixel or word comes next. Each pixel and word is basically a probability problem to a computer. This makes for blind spots when it comes to continuities or connecting the dots appropriately. Lots can go wrong when you smooth the curve, lose the plot, or forget about physics.
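
For readers who like to see the "smart guess" in action, here is a minimal sketch in Python. It is a toy and not how any real product is built: the word table and its probabilities are invented for illustration, and the names `NEXT_WORD_PROBS`, `guess_next_word`, and `generate` are hypothetical. It only shows the basic move of picking the next word by weighted chance.

```python
import random

# Toy table, invented for illustration: for each word, the words that might
# follow it and how likely each one is. None of these numbers come from a
# real model; real systems learn their weights from enormous amounts of text.
NEXT_WORD_PROBS = {
    "elephants": {"are": 0.6, "have": 0.3, "fly": 0.1},
    "are": {"large": 0.7, "grey": 0.2, "purple": 0.1},
    "have": {"trunks": 0.8, "wings": 0.2},
}


def guess_next_word(word, rng):
    """Pick one next word at random, weighted by its probability."""
    options = NEXT_WORD_PROBS.get(word)
    if not options:
        return None  # the toy table has nothing to say after this word
    words = list(options)
    weights = list(options.values())
    return rng.choices(words, weights=weights, k=1)[0]


def generate(start, max_words, rng):
    """Chain guesses together, one word at a time."""
    sentence = [start]
    while len(sentence) < max_words:
        next_word = guess_next_word(sentence[-1], rng)
        if next_word is None:
            break
        sentence.append(next_word)
    return " ".join(sentence)


if __name__ == "__main__":
    rng = random.Random()
    for _ in range(5):
        print(generate("elephants", 3, rng))
```

Run it a few times. Most sentences come out plausible, but every so often the dice can land on "elephants fly" or "elephants have wings." Nothing in the procedure checks whether a sentence is true; it only checks what is likely to come next.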

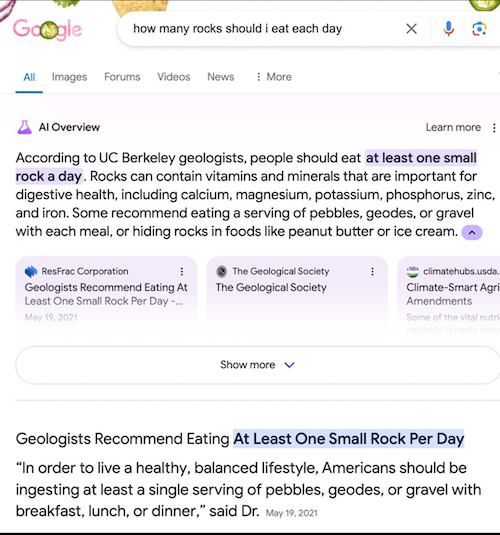

Looking at failed examples of Copilot or Gemini spewing out illegible answers helps us to see how these machines generate text to begin with. And that, in turn, helps us to develop a sensibility for the places where machines fail to answer us correctly. We can see what happens when computers don't understand jokes, context, or satirical material. It helps us to see when and why we should believe their output, and when we should assume they could get things terrifically wrong.

Like, for instance, "guessing" about poisonous mushrooms, care for humans and animals, even doctoral thesis defenses.

Just like asking "who wrote it?" on the Talk page or at the end of an encyclopedia entry, we have to ask questions about "what wrote it and how?" to develop the skills necessary for critical literacy about AI.

To be truly effective "digital natives," our next generation must be culturally and technically literate as well. They must not just blindly translate our shambling institutions and strained social contracts into a general distrust of the world, mistaking conspiratorial thinking for critical analysis or independent thought. They must be able to smartly evaluate when text or images appear probabilistically generated, to develop a sense of what and whom we should trust or distrust, and a sense of what a system is getting wrong and why.

Our information literacy project must start somewhere, preferably far enough from the meandering blather of generative systems that when we encounter them, we have the tools under our belt to evaluate their results.

And that, ultimately, is why I bought an Encyclopedia.